I integrated LLMs into existing workflows without rewriting everything from scratch

Integrating large language models into existing workflows doesn't require a full rewrite. With the right tools, you can embed AI capabilities while keeping your current systems intact. Below are tools that let you plug LLMs into your processes quickly, ranked by relevance and ease of use.

LLM Prompt Saver is a browser extension that works as a prompt repository. With a single click you can store, edit, and reuse prompts for dozens of LLMs, keeping them all in one place. Because it runs in the browser, it sits alongside whatever systems you already use instead of replacing them.

Ideal for individual developers or teams that want instant prompt access across multiple platforms.
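The extension's internals aren't described here. The underlying idea is simple: named, editable prompt templates that are filled in at the moment you use them. The sketch below shows that idea in a few lines of Python. `PromptLibrary` and its methods are hypothetical names for illustration, not LLM Prompt Saver's API. The `$placeholder` syntax comes from Python's standard `string.Template`.

```python
from string import Template


class PromptLibrary:
    """Hypothetical in-memory prompt store: save, edit, and reuse named templates."""

    def __init__(self) -> None:
        # Maps a prompt name to its template text.
        self._prompts: dict[str, str] = {}

    def save(self, name: str, text: str) -> None:
        # Saving under an existing name overwrites it, which doubles as "edit".
        self._prompts[name] = text

    def render(self, name: str, **values: str) -> str:
        # Template.substitute raises KeyError if a $placeholder has no value,
        # so a half-filled prompt never reaches the model.
        return Template(self._prompts[name]).substitute(**values)


library = PromptLibrary()
library.save("summarize", "Summarize this in $n bullet points:\n$text")
library.save("summarize", "Summarize this in $n short bullet points:\n$text")  # edited version
prompt = library.render("summarize", n="3", text="Our team ships on Fridays.")
print(prompt)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice. A missing value fails loudly instead of sending a literal `$text` to the model.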

LangTale provides a unified space for prompt collaboration, letting teams work together on the same prompt base. The intuitive UI and real‑time editing help prevent silos and accelerate iteration. Collaborative prompt editing is the core advantage here.

Best suited for remote teams needing a single source of truth for model interactions.

LLime builds custom AI assistants tailored to business workflows and integrates them into your existing tech stack without extensive code changes. The assistants learn from user feedback, so their performance improves over time and keeps pace with your use case.

Designed for any company looking to embed an AI helper that grows with user data.

ReLLM adds permission-sensitive, long-term context to LLM-powered applications, enabling continuous conversation threads without manual state handling. Because it controls which stored context each user is permitted to draw on, it preserves privacy while keeping responses relevant. Long-term context is the feature that sets it apart.

Perfect for compliance‑heavy environments needing granular data retention controls.
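ReLLM's own API isn't covered here. The sketch below is a rough illustration of what "permission-sensitive long-term context" can mean. It stores each memory together with the roles allowed to see it, and returns only the permitted, most recent entries for inclusion in a prompt. The `ScopedMemory` class and the role names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    allowed_roles: frozenset[str]  # roles permitted to see this memory


@dataclass
class ScopedMemory:
    """Hypothetical long-term memory that only returns entries the caller may see."""

    items: list[MemoryItem] = field(default_factory=list)

    def remember(self, text: str, *roles: str) -> None:
        self.items.append(MemoryItem(text, frozenset(roles)))

    def context_for(self, role: str, limit: int = 5) -> list[str]:
        # Filter by permission first, then keep only the most recent `limit` entries.
        visible = [m.text for m in self.items if role in m.allowed_roles]
        return visible[-limit:] if limit > 0 else []


memory = ScopedMemory()
memory.remember("Customer prefers email contact.", "support", "sales")
memory.remember("Customer's contract renews in March.", "sales")
memory.remember("Open ticket: login failure on mobile.", "support")
print(memory.context_for("support"))
```

In this sketch the permission filter runs before the recency cut. A restricted entry therefore can never push a permitted one out of the context window.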

LiteLLM serves as a unified gateway that routes requests to more than a hundred LLMs using the familiar OpenAI request format, which reduces vendor lock-in. Its lightweight API layer lets you switch providers by changing the model name rather than rewriting your integration code. One gateway across many providers gives you room to experiment.

Best for developers needing fast experimentation across multiple models.
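LiteLLM's own entry point is a `completion(model=..., messages=...)` call that takes OpenAI-style messages. Here is a stdlib-only sketch of the gateway pattern that shows why this makes switching providers cheap. It is not LiteLLM's implementation. `route_completion`, the `provider/model` prefix handling as written here, and the two stub adapters are hypothetical stand-ins for real provider clients.

```python
from typing import Callable

# OpenAI-style chat message: {"role": "user", "content": "..."}
Message = dict[str, str]
Adapter = Callable[[str, list[Message]], str]


def openai_stub(model: str, messages: list[Message]) -> str:
    # Stand-in for a real provider client; a real adapter would make an HTTP call.
    return f"[openai:{model}] {messages[-1]['content']}"


def local_stub(model: str, messages: list[Message]) -> str:
    return f"[local:{model}] {messages[-1]['content']}"


ADAPTERS: dict[str, Adapter] = {"openai": openai_stub, "local": local_stub}
DEFAULT_PROVIDER = "openai"


def route_completion(model: str, messages: list[Message]) -> str:
    """Send one OpenAI-format request to the backend named by the 'provider/' prefix."""
    provider, sep, name = model.partition("/")
    if not sep:  # no prefix: treat the whole string as a model on the default provider
        provider, name = DEFAULT_PROVIDER, model
    adapter = ADAPTERS.get(provider)
    if adapter is None:
        raise ValueError(f"unknown provider: {provider!r}")
    return adapter(name, messages)


messages = [{"role": "user", "content": "Hello"}]
print(route_completion("gpt-4o", messages))
print(route_completion("local/llama3", messages))  # same call, different backend
```

Application code only builds `messages` and picks a model string. Changing providers means editing that string or the adapter table, not the call sites.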

LM Studio lets you download and run open-source LLMs from Hugging Face locally, sidestepping cloud costs and network latency. Its GUI and command-line tool serve both beginners and power users, which flattens the learning curve. Running models locally also keeps data on-premises.

Great for researchers and developers wanting full control over model behavior.
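LM Studio can also serve a loaded model through a local, OpenAI-compatible HTTP server, by default at `http://localhost:1234/v1`. That server is what lets existing code talk to a local model. The sketch below builds such a request with Python's standard library. The model identifier `local-model` is a placeholder assumption. `ask()` only works while the server is running with a model loaded.

```python
import json
import urllib.request

# LM Studio's default local server address (OpenAI-compatible chat endpoint).
LMSTUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_chat_request(prompt: str, model: str = "local-model") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat request for a local server."""
    body = {
        "model": model,  # placeholder: use the identifier of the model you loaded
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        LMSTUDIO_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    # Sends the request; requires the LM Studio server to be running.
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]


req = build_chat_request("Explain our deploy script in two sentences.")
print(req.full_url, req.get_method())
```

Because the request shape matches the OpenAI format, code already written against an OpenAI-style client can usually point at the local URL instead.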

Semantic Kernel is Microsoft's open-source SDK for embedding LLM capabilities into applications, from microservices to desktop apps. It handles orchestration concerns such as prompt templates, plugins, and calls to model providers, so you can focus on business logic rather than plumbing. Its enterprise-grade integration is what speeds adoption.

Suited for Microsoft‑centric stacks looking to add AI features without reinventing the wheel.

AI Docs automates document‑centric tasks using LLMs and offers APIs, Telegram, and WhatsApp bot integrations, turning routine content handling into a single workflow. Its template system accelerates onboarding for new documents. Multi‑channel automation keeps communication consistent.

Ideal for support teams and content creators who need quick answers across platforms.

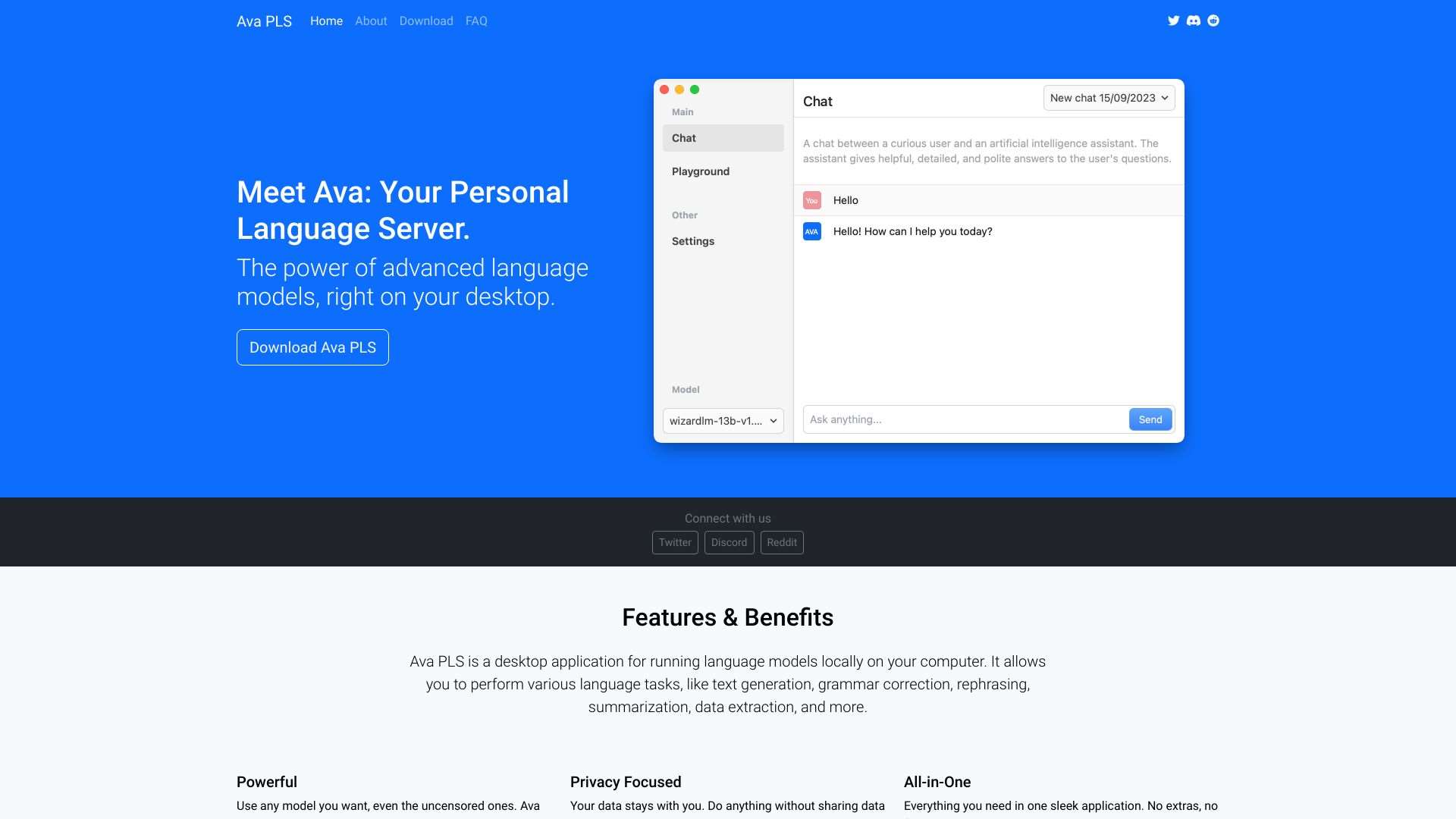

Ava PLS brings large language models to the desktop with an intuitive GUI, so you can run LLMs offline without cloud dependencies. That makes it an easier starting point for teams with data privacy concerns. Because everything runs locally, you can start experimenting without setting up cloud accounts or API keys.

Especially useful for data‑sensitive industries that require local inference.

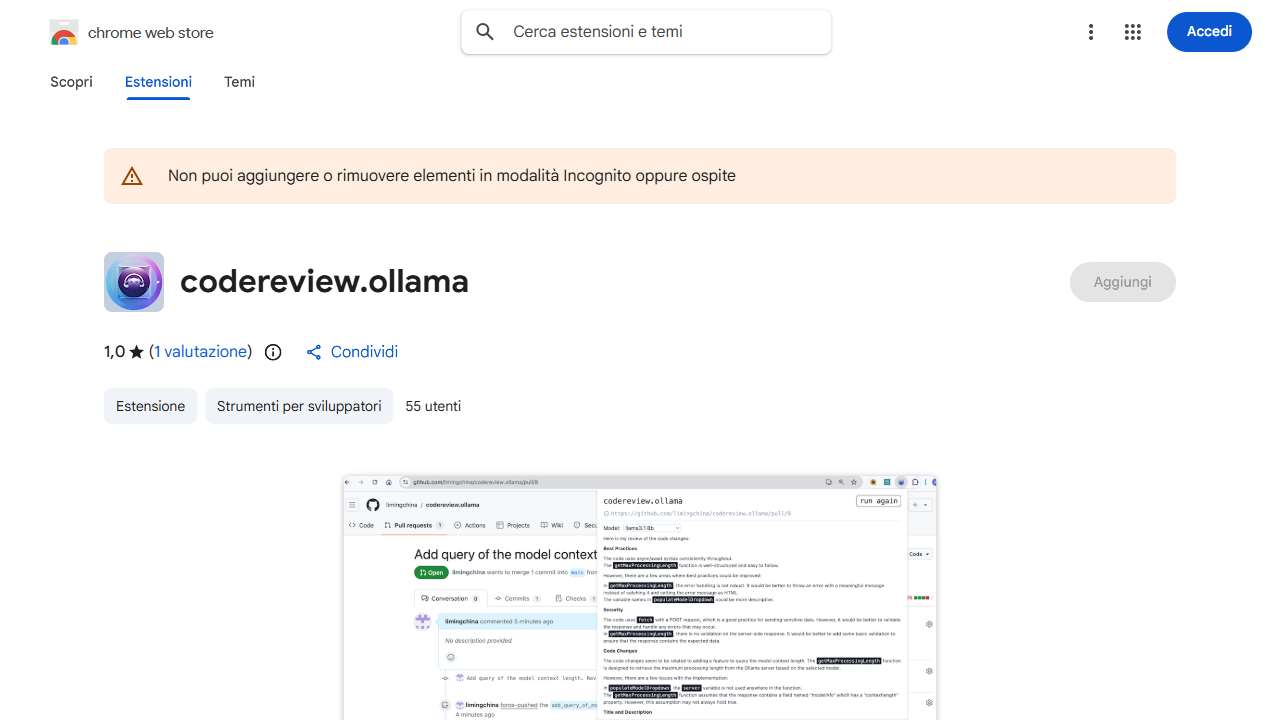

The Chrome extension "Review Pull Requests on github or Merge Requests on gitlab with Ollama using LLMs" makes automated code review on GitHub and GitLab effortless. It uses a model served by Ollama to analyze the changes in a pull or merge request and suggest improvements. Because it runs in the browser on the review page, reviewers get instant, AI-driven feedback on any PR they open without changing the CI pipeline. Intelligent code reviews raise code quality.

Best for development teams that want to reduce manual review workload and catch bugs faster.
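The extension's own code isn't shown here. The snippet below is a sketch of the same idea outside the browser. It turns a unified diff into a review prompt and builds a request for Ollama's local `/api/generate` endpoint (default port 11434) with streaming turned off. The prompt wording, the `max_chars` cut-off, and the `llama3` model name are assumptions. `review()` needs a running Ollama server with that model pulled.

```python
import json
import urllib.request

# Ollama's default local generate endpoint.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_review_prompt(diff: str, max_chars: int = 8000) -> str:
    # Truncate very large diffs so the prompt fits the model's context window.
    if len(diff) > max_chars:
        diff = diff[:max_chars] + "\n[diff truncated]"
    return (
        "You are reviewing a pull request. For the unified diff below, list likely bugs, "
        "risky changes, and concrete improvements. Be brief.\n\n" + diff
    )


def build_review_request(diff: str, model: str = "llama3") -> urllib.request.Request:
    # stream=False asks Ollama for one JSON object instead of a token stream.
    body = {"model": model, "prompt": build_review_prompt(diff), "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def review(diff: str) -> str:
    # Sends the request; the generated text is in the "response" field.
    with urllib.request.urlopen(build_review_request(diff), timeout=120) as resp:
        return json.load(resp)["response"]


sample_diff = """--- a/app.py
+++ b/app.py
@@ -1,2 +1,2 @@
-def add(a, b): return a + b
+def add(a, b): return a - b
"""
req = build_review_request(sample_diff)
print(req.full_url)
```

Keeping the model local through Ollama means the diff never leaves the reviewer's machine. This matters for private repositories.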

By using these tools, you can embed LLM capabilities into your current systems with minimal code changes, smoothing the transition toward smarter workflows.